1. Intelligent Test Case Generation

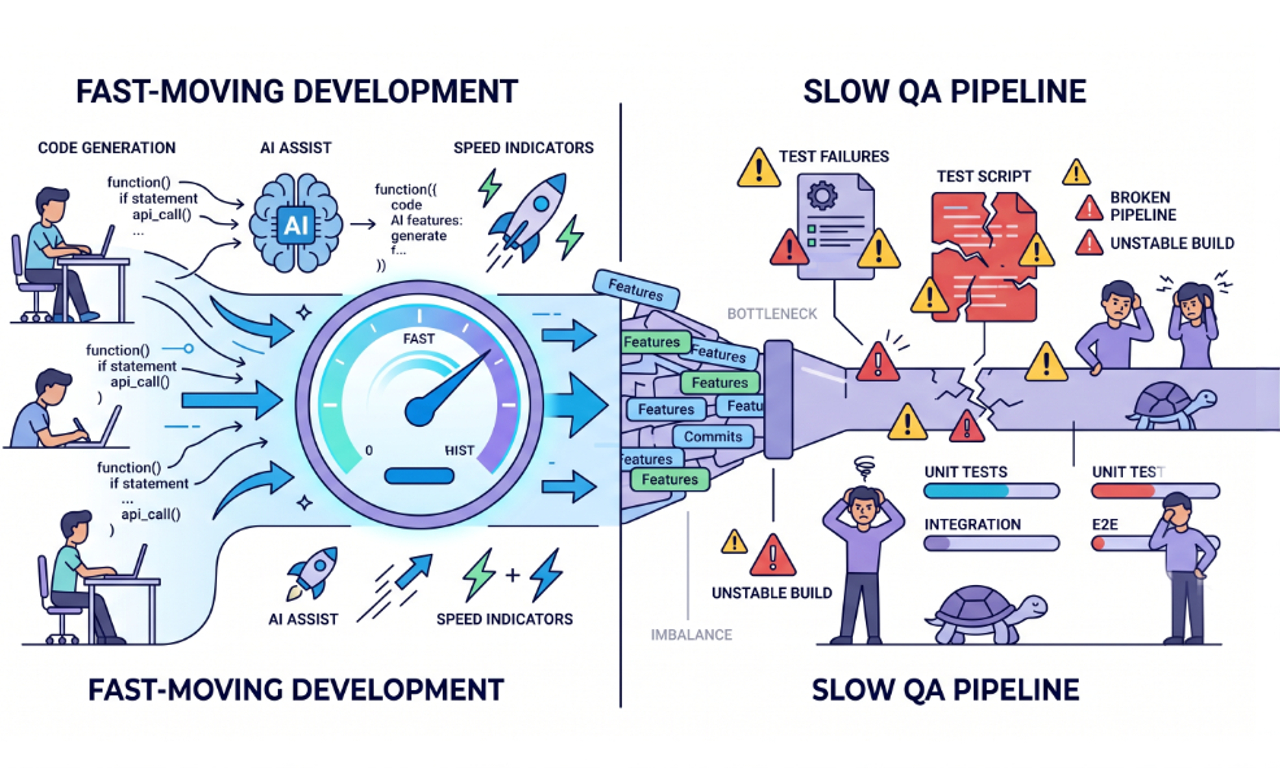

Manually expanding test suites can’t keep pace with feature velocity.

Autonomous agents analyze:

- Code diffs

- Commit history

- Dependency maps

- Past defect patterns

They then generate risk-weighted test coverage automatically.

In real SaaS systems, regression scope often grows faster than QA capacity. Intelligent generation helps close that gap.
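Here is a minimal Python sketch of the risk-weighting step. It assumes each module has already been reduced to four numeric signals that match the inputs listed above. The `ModuleSignals` type, the weights, and the proportional budget split are all illustrative assumptions, not any specific tool's algorithm.

```python
from dataclasses import dataclass


@dataclass
class ModuleSignals:
    """Hypothetical per-module signals gathered from diffs, history, and defect logs."""
    name: str
    lines_changed: int   # from the current code diff
    recent_commits: int  # commits touching the module in a recent window
    past_defects: int    # defects historically traced to the module
    dependents: int      # modules that depend on this one (from the dependency map)


# Assumed weights; a real system would tune or learn these from defect data.
WEIGHTS = {"lines_changed": 0.4, "recent_commits": 0.2, "past_defects": 0.3, "dependents": 0.1}


def normalize(values):
    """Scale non-negative numbers to [0, 1] by dividing by the largest value."""
    top = max(values) if values else 0
    return [v / top if top else 0.0 for v in values]


def risk_scores(modules):
    """Combine the normalized signals into one weighted risk score per module."""
    columns = {key: normalize([getattr(m, key) for m in modules]) for key in WEIGHTS}
    return {
        m.name: sum(WEIGHTS[key] * columns[key][i] for key in WEIGHTS)
        for i, m in enumerate(modules)
    }


def allocate_tests(modules, budget):
    """Split a budget of new test cases across modules in proportion to risk.

    Rounding means the counts may not always sum exactly to `budget`.
    """
    scores = risk_scores(modules)
    total = sum(scores.values())
    if total == 0:
        return {name: 0 for name in scores}
    return {name: round(budget * s / total) for name, s in scores.items()}


modules = [
    ModuleSignals("billing", lines_changed=120, recent_commits=8, past_defects=5, dependents=4),
    ModuleSignals("search", lines_changed=30, recent_commits=2, past_defects=1, dependents=2),
    ModuleSignals("settings", lines_changed=0, recent_commits=0, past_defects=0, dependents=1),
]
print(allocate_tests(modules, budget=20))  # {'billing': 16, 'search': 4, 'settings': 0}
```

Normalizing each signal by its maximum stops one large diff from drowning out defect history and dependency reach. The result is a ranking of where new tests are most needed, not a guarantee of coverage.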

2. Self-Healing Test Suites

One of the biggest hidden costs in QA is maintenance.

Traditional automation breaks when:

- UI selectors change

- DOM structure shifts

- Minor attributes are updated

Autonomous systems fix this by:

- Detecting selector drift

- Adapting locators dynamically

- Validating behavior instead of structure

Instead of constantly fixing broken scripts, QA shifts to supervising the repairs.
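The drift-detection and adaptation steps can be sketched in Python as a fallback lookup. When the recorded selector no longer matches, the code picks the element whose attributes best overlap a stored fingerprint of the last known good match. The page model (a list of attribute dictionaries), the Jaccard similarity measure, and the 0.5 threshold are hypothetical choices. Behavioral validation is not shown.

```python
from typing import Optional

# Hypothetical page model: each element is a dict of attributes captured from the DOM.
Element = dict[str, str]


def similarity(a: Element, b: Element) -> float:
    """Jaccard index over (attribute, value) pairs: shared pairs / all distinct pairs."""
    pairs_a, pairs_b = set(a.items()), set(b.items())
    union = pairs_a | pairs_b
    return len(pairs_a & pairs_b) / len(union) if union else 0.0


def find_element(
    page: list[Element],
    selector: tuple[str, str],
    fingerprint: Element,
    threshold: float = 0.5,
) -> tuple[Optional[Element], bool]:
    """Return (element, healed); healed is True when a fallback match was used."""
    attr, value = selector
    # 1. Try the recorded selector first.
    for element in page:
        if element.get(attr) == value:
            return element, False
    # 2. Selector drift detected: rank candidates against the last known good fingerprint.
    best = max(page, key=lambda el: similarity(el, fingerprint), default=None)
    if best is not None and similarity(best, fingerprint) >= threshold:
        return best, True
    # 3. No confident match: fail loudly instead of guessing.
    return None, False


fingerprint = {"id": "checkout-btn", "tag": "button", "text": "Checkout", "class": "btn primary"}
page_after_deploy = [
    {"id": "nav-home", "tag": "a", "text": "Home"},
    {"id": "checkout-button", "tag": "button", "text": "Checkout", "class": "btn primary"},
]
element, healed = find_element(page_after_deploy, ("id", "checkout-btn"), fingerprint)
print(element["id"], healed)  # checkout-button True
```

Returning a `healed` flag lets the pipeline log each repair for a human to confirm. That review step is the supervision role described above.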

3. Risk-Based Test Prioritization

Not all tests matter equally.

AI-driven QA prioritizes tests based on:

- High-change modules

- Revenue-critical flows

- Defect history

- System dependencies

Traditional vs Autonomous QA

| Area | Traditional QA | Autonomous QA |
|---|---|---|
| Test Execution | Full suite every time | Risk-based selection |
| Maintenance | Manual fixes | Self-healing |
| Coverage | Static | Dynamic |
| Debugging | Manual investigation | AI-driven analysis |

Running the highest-risk tests first shortens test cycles without sacrificing confidence.
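The prioritization factors listed in this section can drive a concrete selection: score each test, then fill a time budget greedily, starting with the highest-scoring tests. The `CandidateTest` fields, the weights, and the greedy strategy below are assumptions for illustration. Greedy filling is a simple heuristic and does not guarantee the best possible subset.

```python
from dataclasses import dataclass


@dataclass
class CandidateTest:
    name: str
    duration_s: float       # typical runtime in seconds
    touches_changed: bool   # exercises a high-change module in this change set
    revenue_critical: bool  # covers a revenue-critical flow such as checkout
    recent_failures: int    # failures in a recent window (defect history)
    dependencies: int       # downstream services the flow depends on


def risk_score(t: CandidateTest) -> float:
    """Weighted sum of the four prioritization factors (weights are assumptions)."""
    return (
        3.0 * t.touches_changed
        + 2.0 * t.revenue_critical
        + 0.5 * min(t.recent_failures, 5)  # cap so a long failure streak can't dominate
        + 0.25 * t.dependencies
    )


def select_tests(tests, budget_s):
    """Greedily pick the highest-risk tests that fit in the time budget.

    Returns (selected, deferred); selected is in execution order, highest risk first.
    Deferred tests could run later, for example in a nightly full-suite job.
    """
    selected, deferred, used = [], [], 0.0
    for t in sorted(tests, key=risk_score, reverse=True):
        if used + t.duration_s <= budget_s:
            selected.append(t.name)
            used += t.duration_s
        else:
            deferred.append(t.name)
    return selected, deferred


tests = [
    CandidateTest("checkout_e2e", 120, True, True, 1, 3),
    CandidateTest("search_filters", 40, True, False, 0, 1),
    CandidateTest("profile_avatar", 30, False, False, 0, 0),
    CandidateTest("invoice_pdf", 60, False, True, 2, 2),
]
print(select_tests(tests, budget_s=220))
# (['checkout_e2e', 'invoice_pdf', 'search_filters'], ['profile_avatar'])
```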

4. Intelligent Root Cause Analysis

Traditional pipelines say:

Test failed.

Autonomous agents ask:

- Is it a UI regression?

- A backend issue?

- A data inconsistency?

- Environment instability?

By correlating logs, recent commits, and historical failure patterns, AI can significantly reduce debugging time.

In fast-moving teams, debugging often takes more time than development. This flips that equation.
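Below is a deliberately simple, rule-based Python sketch of the triage step. It counts log lines that match hypothetical patterns for each of the four questions above. It then adds one more signal based on which paths the recent commits touched. The patterns and the `frontend/` and `services/` path conventions are assumptions. An AI-driven system would typically learn these associations from labelled past failures instead of hard-coding them.

```python
import re
from collections import Counter

# Hypothetical log patterns per category; a learned model would replace these rules.
PATTERNS = {
    "ui_regression": [r"element not found", r"not clickable", r"selector", r"screenshot diff"],
    "backend_issue": [r"\b5\d\d\b", r"internal server error", r"exception", r"stack trace"],
    "data_inconsistency": [r"expected .* but got", r"constraint violation", r"duplicate key"],
    "environment_instability": [r"timeout|timed out", r"connection refused", r"out of memory"],
}


def classify_failure(log_lines, changed_files=()):
    """Return (most likely category, per-category evidence counts)."""
    scores = Counter()
    for line in log_lines:
        text = line.lower()
        for category, patterns in PATTERNS.items():
            # Each line counts at most once per category.
            if any(re.search(p, text) for p in patterns):
                scores[category] += 1
    # Commit signal, assuming a repo layout with frontend/ and services/ directories.
    if any(path.startswith("frontend/") for path in changed_files):
        scores["ui_regression"] += 1
    if any(path.startswith("services/") for path in changed_files):
        scores["backend_issue"] += 1
    if not scores:
        return "unknown", {}
    category, _ = scores.most_common(1)[0]
    return category, dict(scores)


logs = [
    "POST /api/orders returned 500 Internal Server Error",
    "java.lang.NullPointerException at OrderService.create",
    "Test checkout_e2e failed: expected order id but got none",
]
print(classify_failure(logs, changed_files=["services/orders/create.py"]))
# ('backend_issue', {'backend_issue': 3, 'data_inconsistency': 1})
```

The function returns the evidence counts along with the verdict. The counts let an engineer see why a failure was labelled a backend issue, which matters more than the label itself when deciding where to look first.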

5. Continuous Learning from Production

Staging rarely reflects the full complexity of production.

Autonomous QA systems monitor:

- Production errors

- User behavior

- Performance anomalies

- Edge-case interactions

They then feed those signals back into testing.

This creates a closed feedback loop, which is critical for scalable systems.
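Here is a minimal sketch of closing the loop. It assumes production monitoring already emits normalized events, and it turns recurring issues that no existing test covers into candidate regression tests. The `ProdEvent` type, the event kinds, the `min_occurrences` threshold, and the proposal format are all hypothetical.

```python
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ProdEvent:
    kind: str       # hypothetical kinds: "error", "perf_anomaly", "edge_case"
    endpoint: str
    signature: str  # normalized error message or anomaly label


def slug(text: str) -> str:
    """Lowercase and collapse non-alphanumeric runs into underscores."""
    return re.sub(r"[^a-z0-9]+", "_", text.lower()).strip("_")


def propose_regression_tests(events, covered, min_occurrences=3):
    """Turn recurring, untested production issues into candidate test specs.

    `covered` is a set of (endpoint, signature) pairs existing tests already exercise.
    Proposals are ordered by how often the issue occurred in production.
    """
    counts = Counter(events)  # frozen dataclasses are hashable
    proposals = []
    for event, n in counts.most_common():
        if n >= min_occurrences and (event.endpoint, event.signature) not in covered:
            proposals.append({
                "name": f"regress_{slug(event.endpoint)}_{slug(event.signature)}",
                "kind": event.kind,
                "endpoint": event.endpoint,
                "reproduce": event.signature,
                "occurrences": n,
            })
    return proposals


events = (
    [ProdEvent("error", "/api/checkout", "currency mismatch")] * 4
    + [ProdEvent("perf_anomaly", "/api/search", "p95 over 2s")] * 3
    + [ProdEvent("edge_case", "/api/profile", "emoji in display name")]
)
covered = {("/api/search", "p95 over 2s")}
for proposal in propose_regression_tests(events, covered):
    print(proposal["name"], proposal["occurrences"])  # regress_api_checkout_currency_mismatch 4
```

Requiring several occurrences filters out one-off noise. The threshold trades sensitivity for a smaller review queue, and proposals still need a human or a generator to turn them into executable tests.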